IP Sockets - Networking Fundamentals - Part 1

Introduction to Sockets

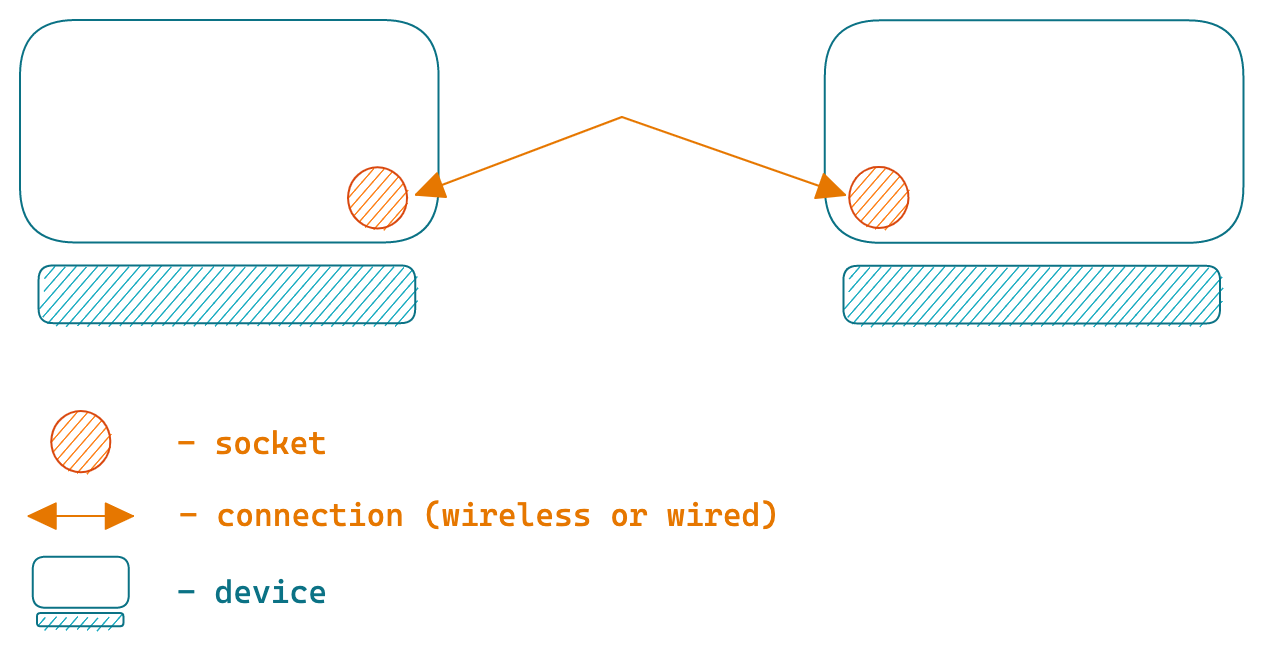

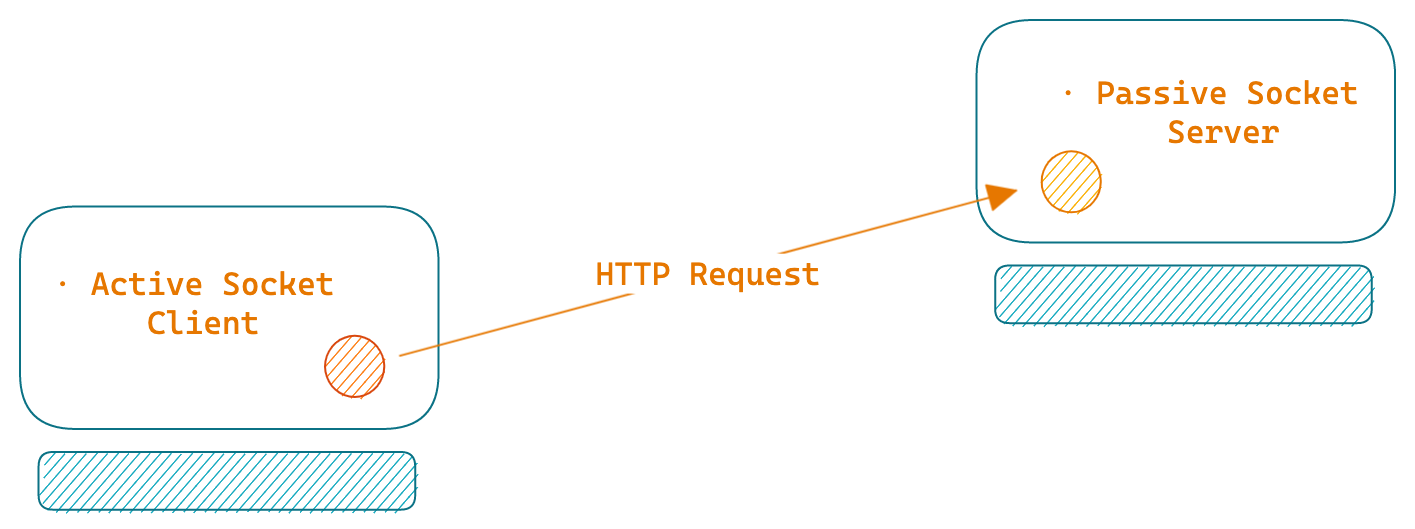

In an operating system, a socket is a virtual representation of one endpoint of a connection. Whenever a client establishes a connection with a server, it results in the creation of a socket on the client side and another one on the server side.

In other words, when you want to send data over a network, you write the data (bytes) to a socket. When you read data from a network, you read it from a socket.
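To make the "write bytes in, read bytes out" idea concrete, here is a minimal sketch in Python. It assumes a pair of already-connected local sockets from `socket.socketpair()` as a stand-in for a real network connection; the helper name `round_trip` is made up for illustration.

```python
import socket


def round_trip(payload: bytes) -> bytes:
    """Write bytes into one socket and read them back from its peer."""
    # socketpair() returns two sockets that are already connected to each
    # other on the same host, so no real network setup is needed here.
    left, right = socket.socketpair()
    try:
        left.sendall(payload)             # sending: write bytes to a socket
        return right.recv(len(payload))   # receiving: read bytes from a socket
    finally:
        left.close()
        right.close()


print(round_trip(b"hello"))
```

The application only ever touches the two socket objects; everything between them is handled by the kernel.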

Sockets can exist in two states, either active or passive. An active socket is used when a client initiates a connection request, such as an HTTP request. On the other hand, a passive socket is used to receive connection requests, for example, in an HTTP server.

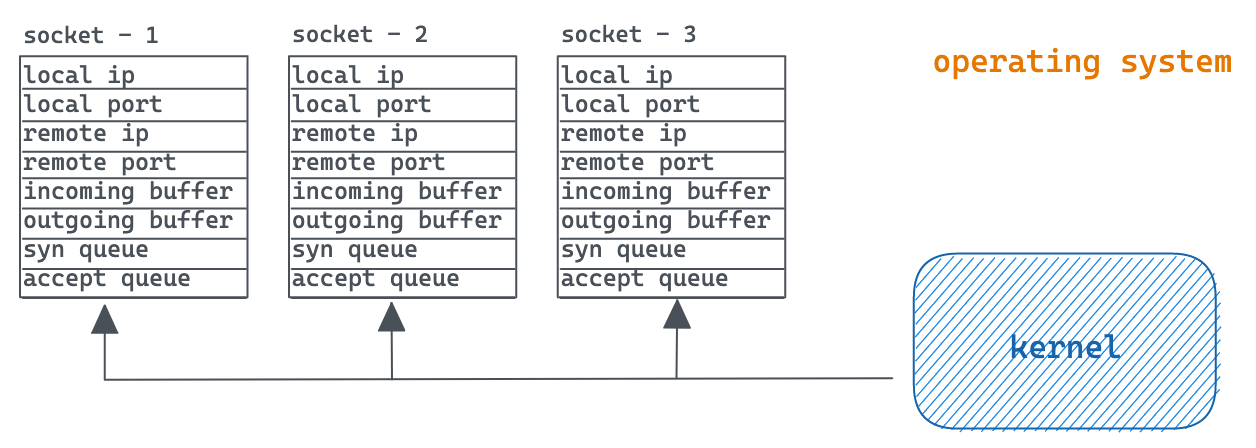

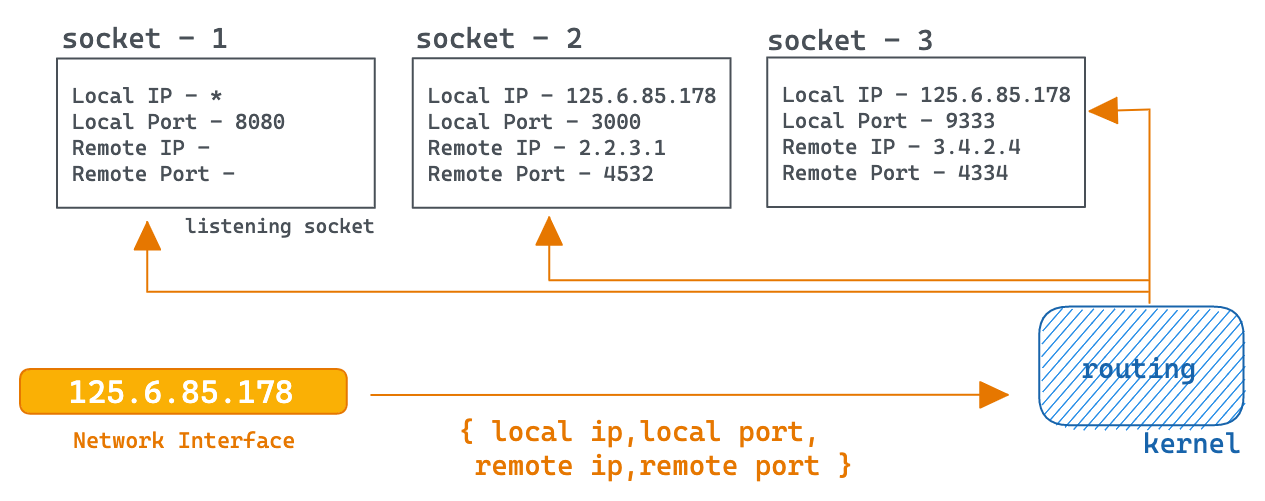

Internally, a socket is a data structure maintained by the kernel that holds information such as the local IP (of the connection), local port, remote IP, remote port, and the incoming and outgoing buffers. In UNIX, a socket is created with the socket() system call.
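As a rough illustration, Python's `socket.socket()` wraps the socket() system call, and the resulting object exposes the descriptor that refers to the kernel's data structure. The helper name `describe_new_socket` is hypothetical, and the sketch assumes an IPv4 TCP socket.

```python
import socket


def describe_new_socket() -> dict:
    """Create a TCP socket and report what the application can see of it."""
    # socket.socket() asks the kernel for a new socket via socket().
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        return {
            "descriptor": sock.fileno(),  # integer handle to the kernel object
            "family": sock.family.name,   # AF_INET means IPv4
            "type": sock.type.name,       # SOCK_STREAM means TCP
        }
    finally:
        sock.close()


print(describe_new_socket())
```

The descriptor value itself varies from run to run; the application uses it only as a handle and never sees the kernel structure directly.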

Typically, server sockets listen for incoming connections on a well-known or conventional default port, such as 80 for HTTP, 443 for HTTPS, 21 for FTP, or 27017 for MongoDB.

Similarly, when you initiate a connection such as an HTTP request, the client socket that gets created also requires a local port. Client sockets, however, use temporary (ephemeral) ports that the kernel assigns automatically.
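You can observe an ephemeral port being assigned with a small experiment. This is a sketch that assumes a throwaway listener on the loopback address 127.0.0.1 as a stand-in for a real server; `ephemeral_port_demo` is an illustrative name.

```python
import socket


def ephemeral_port_demo() -> tuple:
    """Return (server_port, client_port) for one loopback connection."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        # Port 0 asks the kernel to pick any free port for the stand-in server.
        server.bind(("127.0.0.1", 0))
        server.listen()
        server_port = server.getsockname()[1]

        # The client never calls bind(); the kernel assigns its local port
        # when the connection is initiated.
        with socket.create_connection(("127.0.0.1", server_port)) as client:
            client_port = client.getsockname()[1]
    return server_port, client_port


server_port, client_port = ephemeral_port_demo()
print("server listens on", server_port, "and the client was given", client_port)
```

Running it several times usually prints a different client port each time, since the port is only borrowed for the lifetime of the socket.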

Socket Pair

A socket pair consists of four elements that determine how incoming and outgoing traffic is routed through a system: the local IP, local port, remote IP, and remote port. When a TCP segment arrives, the kernel's TCP implementation examines all four of these elements to route the segment to the correct endpoint (i.e., a socket).

A socket pair can be represented as:

{Local IP:Local Port, Remote IP:Remote Port}

For example, a server socket that listens on port 8080 and can receive requests from any IP can be denoted as:

{*:8080, -:-}

Since this is a listening socket, the remote IP and port are both unspecified.

By setting the local IP to a wildcard (*), a server socket can accept incoming connection requests on any of the host’s network interfaces. For IPv4, the wildcard is written as the address 0.0.0.0.

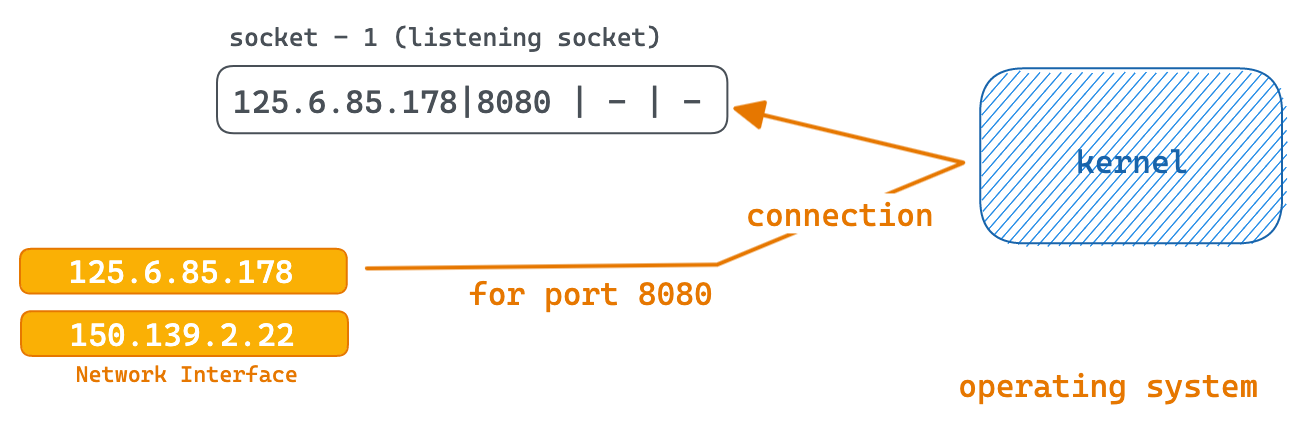

Suppose the host has two network interface cards (NICs) with two distinct IPs, such as 25.6.85.178 and 150.139.2.22, and we want the server to only accept connection requests sent to the IP 25.6.85.178. In this scenario, the socket pair’s local IP configuration can be set to:

{25.6.85.178:8080, -:-}

When creating a socket in the listening mode you don’t have to specify values for the remote IP and remote port.
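The difference between a wildcard bind and a bind to one specific address can be sketched as follows. The example IPs above will not exist on your machine, so this sketch assumes 127.0.0.1 as the "specific" address and port 0 (any free port); `listening_address` is a hypothetical helper.

```python
import socket


def listening_address(host: str) -> tuple:
    """Bind a listening socket to host and return its local (ip, port)."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.bind((host, 0))   # only the local side is specified
        sock.listen()
        return sock.getsockname()


# Wildcard: reachable through any IPv4 interface of the host.
print(listening_address("0.0.0.0"))
# Specific address: reachable only through the interface that owns it.
print(listening_address("127.0.0.1"))
```

In both cases no remote IP or port is supplied, which matches the `-:-` half of the notation used here.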

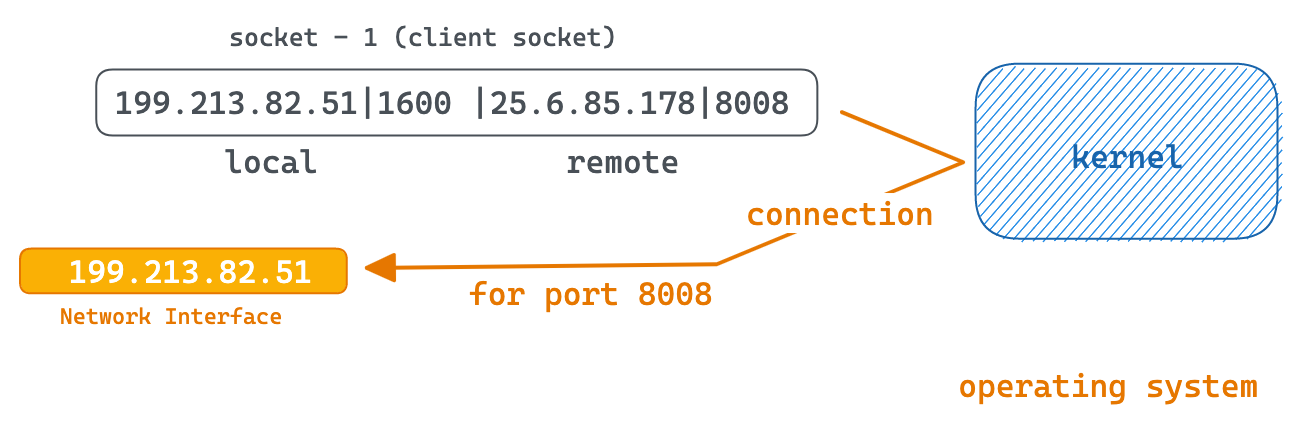

On the other hand, when creating a client socket, the values for the remote IP and port are required. The local IP for a client socket is usually not set by the application code. Instead, the kernel selects the local IP based on the outgoing network interface that will be used to connect to the other endpoint, typically a server. The local port is also assigned automatically by the kernel (TCP) and is typically a short-lived, reusable port that is freed after the request is completed and the socket is closed. An application can still choose the local address itself by calling bind() before connect(), but this is rarely needed.

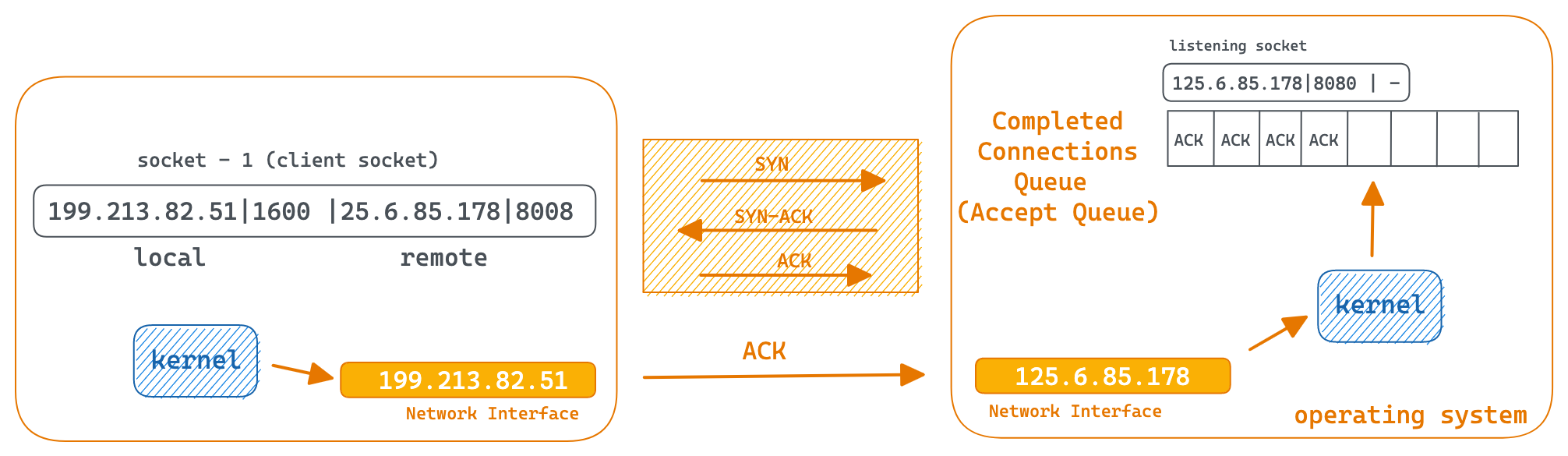

For example, if a host with IP 199.213.82.51 wants to connect to a server with IP 25.6.85.178 on port 8008 and the ephemeral port assigned (by the kernel) to it is 1600, the client-side socket pair would look something like:

{199.213.82.51:1600, 25.6.85.178:8008}

Creating A Server Socket

The process of creating a server socket begins with a system call to create a new socket. The socket() call returns a socket descriptor, also known as a file descriptor.

The next step is to bind the socket to a local IP and port using the bind() system call. Since this is a server socket, the remote IP and port do not need to be specified.

Afterwards, you must execute the listen() system call to transition the socket to a listening state, so that it can wait for incoming connection requests.

Following creation, a socket can take one of two routes: it can either become a server socket if you issue the listen() system call, or it can become a client socket if you issue the connect() system call.
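The three steps for the server route map directly onto Python's socket API. The following is a minimal sketch, not a production setup: the function name `make_listening_socket`, the loopback address, and the backlog value of 16 are all assumptions chosen for illustration.

```python
import socket


def make_listening_socket(host: str = "127.0.0.1", port: int = 0,
                          backlog: int = 16) -> socket.socket:
    """Run the socket() -> bind() -> listen() sequence and return the socket."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)  # socket()
    try:
        sock.bind((host, port))   # bind(): local IP and port only
        sock.listen(backlog)      # listen(): switch to the listening state
    except OSError:
        sock.close()
        raise
    return sock


server = make_listening_socket()
print("listening on", server.getsockname())
server.close()
```

Calling `connect()` on a freshly created socket instead of `bind()` and `listen()` would take the other route and turn it into a client socket.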

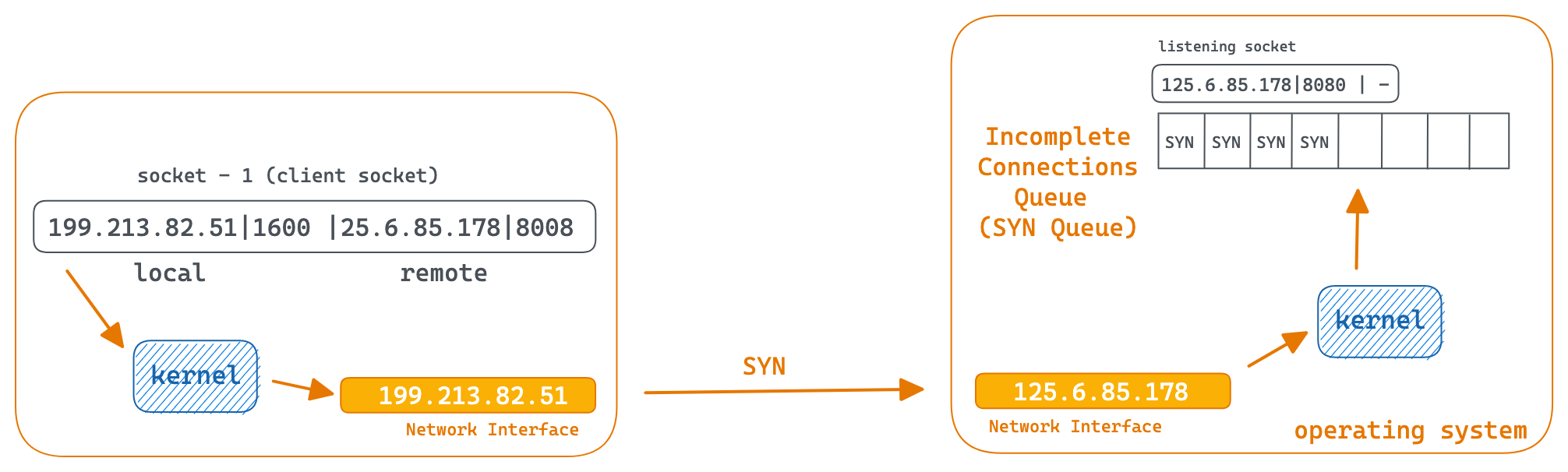

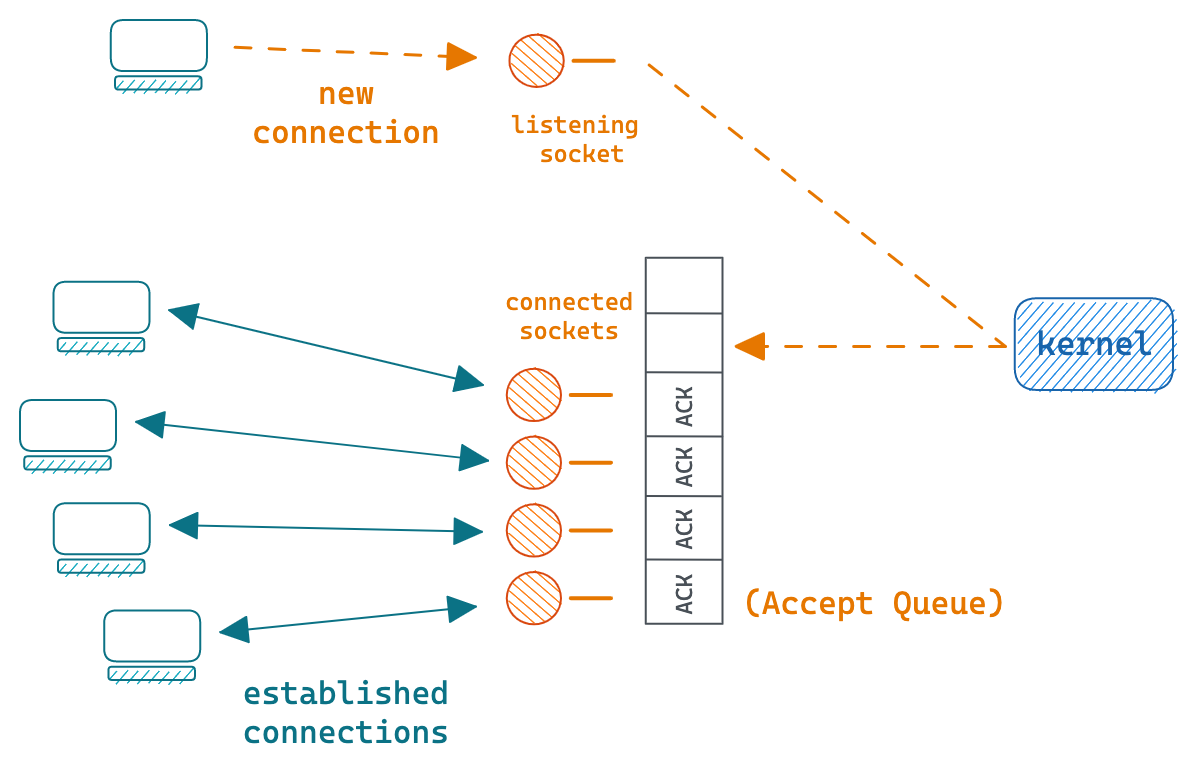

The kernel maintains two queues for each listening socket.

The SYN queue: Also known as the incomplete connection queue, which temporarily stores connection requests that have not completed the three-way handshake. Upon receiving a SYN from a client socket, the kernel creates an entry in the incomplete connection queue and replies with a SYN-ACK, the second step of the three-way handshake.

The Accept queue: Also known as the completed connection queue, which contains requests that have successfully completed the handshake and have been assigned a socket by the kernel. Once the handshake is successfully completed, the entry is then moved from the SYN queue to the Accept queue.
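One visible consequence of these queues is that a client's connection can be fully established before the server application has called accept(). The sketch below assumes a loopback listener with a backlog hint of 8; `connect_before_accept` is an illustrative name, and the exact way the backlog is interpreted depends on the operating system.

```python
import socket


def connect_before_accept(n_clients: int = 3) -> int:
    """Connect several clients first, then drain the accept queue."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind(("127.0.0.1", 0))
        server.listen(8)        # backlog: a hint for how many connections may wait
        server.settimeout(5)    # avoid hanging forever if something goes wrong
        address = server.getsockname()

        # Each connect returns once the handshake is done, even though the
        # server application has not called accept() yet.
        clients = [socket.create_connection(address) for _ in range(n_clients)]

        accepted = 0
        try:
            for _ in range(n_clients):
                conn, _peer = server.accept()  # take a completed connection
                conn.close()
                accepted += 1
        finally:
            for client in clients:
                client.close()
    return accepted


print(connect_before_accept(3))
```

The kernel performs the handshakes on its own; accept() merely hands the already completed connections over to the application.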

It is worth noting that if a server receives 100 connection requests, it will create 100 distinct sockets, one for each connection. These 100 sockets are separate from the listening socket, which merely listens for incoming connection requests. Once a request is received, the kernel assigns a new socket to handle it so that the listening socket can continue to listen for additional connection requests.

The same is true for client sockets, i.e., if you initiate 100 connection requests, the system will create 100 client sockets.

For both client and server sockets, the kernel may not necessarily allocate 100 brand-new sockets for 100 requests, since it can reuse resources freed by sockets that are no longer in use.

The server application retrieves a connection from the Accept queue by calling the accept() system call. If the queue is empty, the call blocks (by default) until a new connection arrives; otherwise, the connection at the front of the queue is returned. The returned socket is used to communicate with the client, and once the communication is concluded, it can be closed using the close() system call.
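The accept, communicate, close sequence might look like the sketch below. To keep it self-contained it plays both roles in one function over the loopback interface and handles a single small message; `echo_once` is a hypothetical name, and a real server would loop over accept() instead.

```python
import socket


def echo_once(message: bytes) -> bytes:
    """Accept one connection, echo one small message back, then close."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))
        listener.listen()

        with socket.create_connection(listener.getsockname()) as client:
            client.sendall(message)

            # accept() returns a NEW socket for this connection; the listener
            # itself stays in the listening state.
            conn, _peer = listener.accept()
            with conn:                  # close() is called when the block exits
                data = conn.recv(1024)  # read what the client sent
                conn.sendall(data)      # write the same bytes back

            return client.recv(1024)


print(echo_once(b"ping"))
```

Note that `conn` and `listener` are different objects with different descriptors, which is what lets the listener keep serving other clients.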

Server Client Example

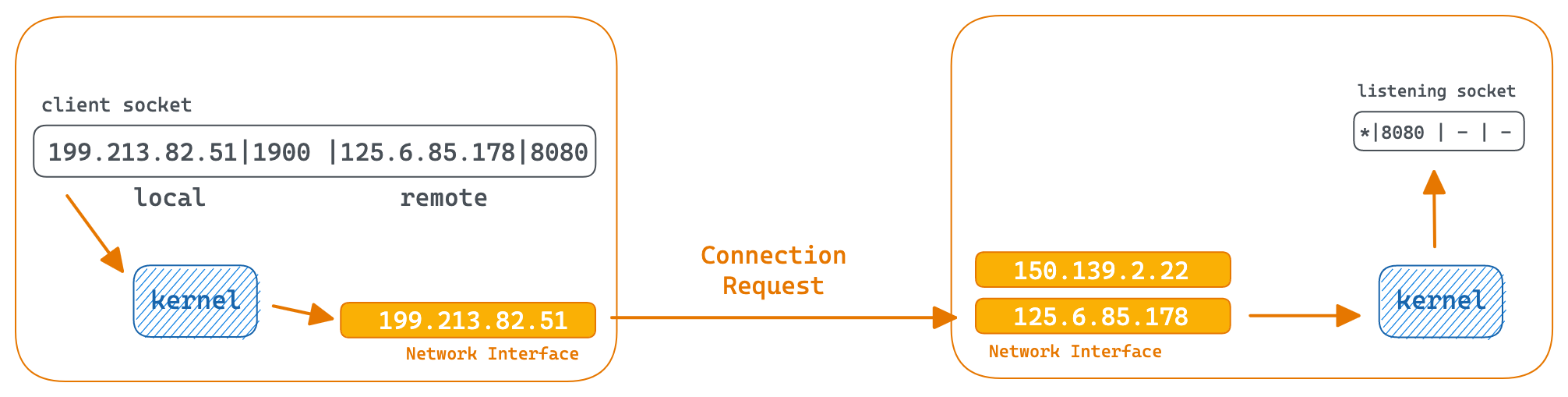

Let us consider an example to demonstrate how sockets operate.

Suppose we have a host machine with two network interfaces, each with its own IP: 125.6.85.178 and 150.139.2.22. The server application is started on port 8080.

The socket pair for the listening socket can be denoted as:

{Local IP:Local Port, Remote IP:Remote Port}

{*:8080, -:-}

The server is now ready to listen for connections on port 8080.

On the other hand, a client with the IP 199.213.82.51 initiates a connection to the server at 125.6.85.178 on port 8080. We’ll assume the ephemeral port assigned to the client socket is 1900.

The socket pair for the socket on the client can be denoted as:

{Local IP:Local Port, Remote IP:Remote Port}

{199.213.82.51:1900, 125.6.85.178:8080}

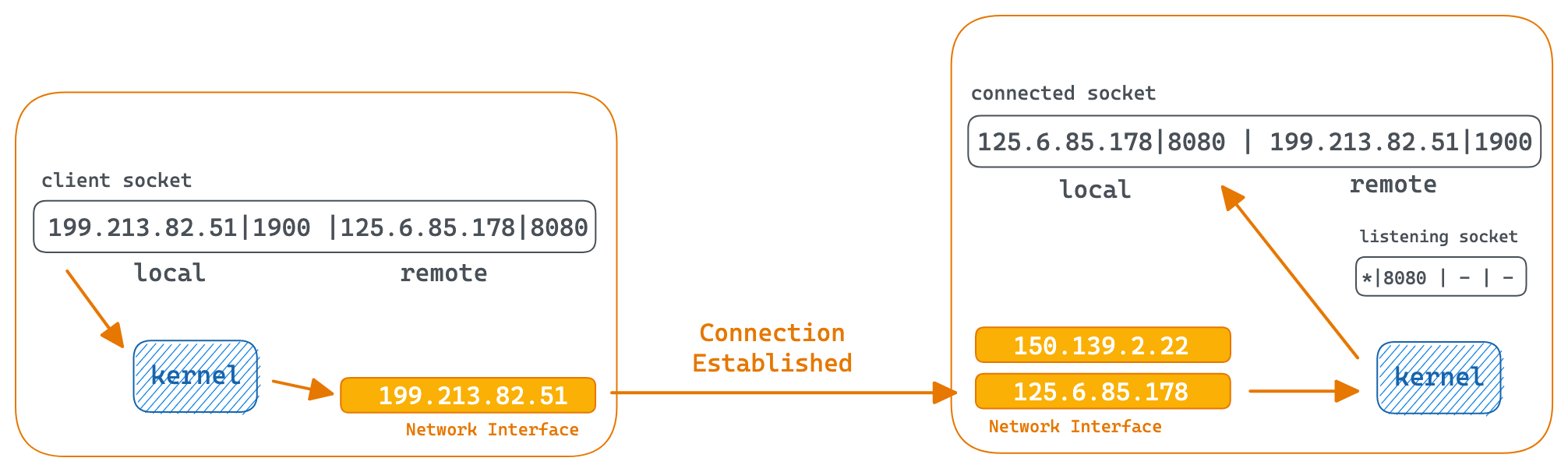

The kernel receives the connection request on the listening socket, completes the three-way handshake, and assigns a new socket to handle the connection. The socket pair for the new socket can be denoted as:

{Local IP:Local Port, Remote IP:Remote Port}

{125.6.85.178:8080, 199.213.82.51:1900}

For the new socket created on the server side, the local IP is determined by the network interface that received the request (125.6.85.178), while the local port is equal to the port of the listening socket. The remote IP (199.213.82.51) and port (1900) correspond to the IP and port of the client socket.
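The three socket pairs in this example can be reproduced on a single machine. The sketch below assumes the loopback address 127.0.0.1 in place of the example IPs and a kernel-chosen port in place of 8080; `socket_pair` and `three_socket_pairs` are illustrative helper names.

```python
import socket


def socket_pair(sock: socket.socket) -> str:
    """Format a socket as {Local IP:Local Port, Remote IP:Remote Port}."""
    local = "{}:{}".format(*sock.getsockname()[:2])
    try:
        remote = "{}:{}".format(*sock.getpeername()[:2])
    except OSError:
        remote = "-:-"  # a listening socket has no remote endpoint
    return "{" + local + ", " + remote + "}"


def three_socket_pairs() -> tuple:
    """Return the pairs of the listening, client, and connected sockets."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))
        listener.listen()
        with socket.create_connection(listener.getsockname()) as client:
            conn, _peer = listener.accept()
            with conn:
                return socket_pair(listener), socket_pair(client), socket_pair(conn)


for label, pair in zip(("listening", "client", "connected"), three_socket_pairs()):
    print(label, pair)
```

The client and connected pairs come out as mirror images of each other, and the connected socket shares its local port with the listening socket.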

It’s important to note that the port number 8080 is used by both the listening socket and the connected socket. This is because the kernel utilizes all four elements of the socket pair (local IP, local port, remote IP, remote port) to route a TCP segment and not just the port number.

When a TCP segment arrives at 125.6.85.178 on port 8080 from 199.213.82.51 and port 1900, it is delivered to the connected socket. Segments for port 8080 that do not match any connected socket, such as a SYN from a new client, are delivered to the listening socket.
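The lookup described here can be modelled with a couple of dictionaries. This is a toy model for intuition only, not how any real kernel stores its sockets; the class `SocketTable` and its methods are made up for this sketch.

```python
from typing import Dict, Optional, Tuple


class SocketTable:
    """Toy model of routing a TCP segment to a socket by its four-tuple."""

    def __init__(self) -> None:
        # (local ip, local port, remote ip, remote port) -> socket name
        self.connected: Dict[Tuple[str, int, str, int], str] = {}
        # (local ip or "*", local port) -> socket name
        self.listening: Dict[Tuple[str, int], str] = {}

    def add_listener(self, local_ip: str, local_port: int, name: str) -> None:
        self.listening[(local_ip, local_port)] = name

    def add_connection(self, local_ip: str, local_port: int,
                       remote_ip: str, remote_port: int, name: str) -> None:
        self.connected[(local_ip, local_port, remote_ip, remote_port)] = name

    def route(self, dst_ip: str, dst_port: int,
              src_ip: str, src_port: int) -> Optional[str]:
        # 1. An exact match on all four elements wins.
        exact = self.connected.get((dst_ip, dst_port, src_ip, src_port))
        if exact is not None:
            return exact
        # 2. Otherwise fall back to a listener on that IP, then the wildcard.
        for key in ((dst_ip, dst_port), ("*", dst_port)):
            if key in self.listening:
                return self.listening[key]
        return None  # nothing is listening on this port


table = SocketTable()
table.add_listener("*", 8080, "listening socket")
table.add_connection("125.6.85.178", 8080, "199.213.82.51", 1900, "connected socket")

print(table.route("125.6.85.178", 8080, "199.213.82.51", 1900))  # connected socket
print(table.route("125.6.85.178", 8080, "199.213.82.51", 1901))  # listening socket
print(table.route("125.6.85.178", 9090, "199.213.82.51", 1900))  # None
```

The second call differs from the first only in the remote port, which is enough to send the segment to the listening socket instead of the connected one.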

Conclusion

In the second part of this blog, we will delve into the details of the interaction between Node.js and Unix sockets, and explore the role played by the event loop when handling multiple sockets on a primarily single-threaded platform.

Sockets are the backbone of network operations, serving as the basic building blocks that enable the exchange of information between multiple systems. While working with sockets directly may not be necessary for everyday applications, a solid grasp of how they function can be advantageous when fine-tuning network-intensive programs.